About

Software engineer.

I've built and shipped backend services, APIs, and frontend features across production environments.

More recently, I've been focused on using AI to improve developer workflows and automate parts of the development process.

I'm interested in building tools that make complex or messy behavior easier to understand and work with.

Experience

Where I've worked

Software Developer

Fusion92

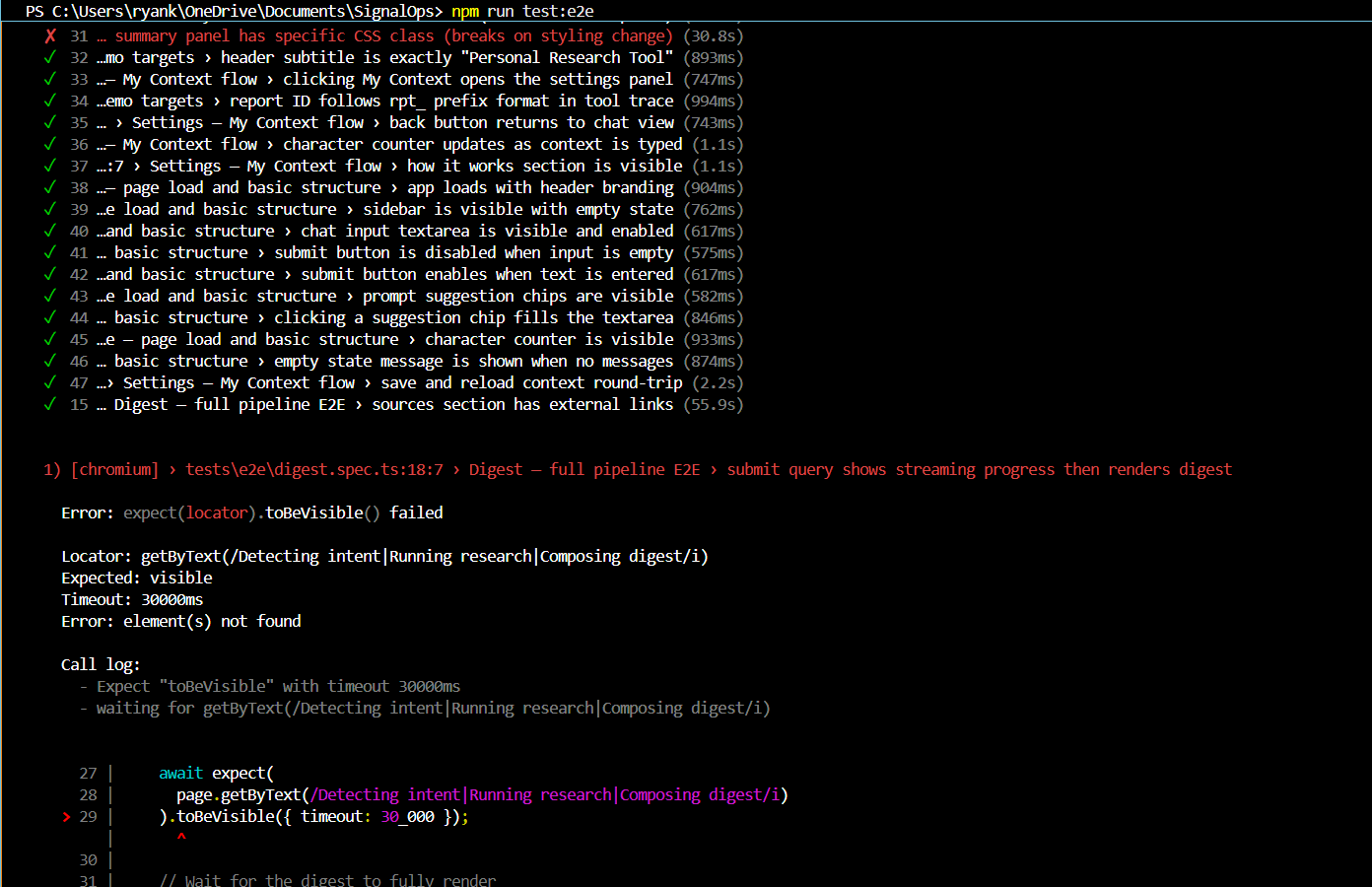

- Built an AI-powered E2E test failure analyzer for a Playwright suite of 140+ tests, combining GPT-4o vision for screenshot analysis with a LangChain tool-calling agent to automate root-cause investigation.

- Reduced manual debugging time from ~2 hours to under 5 minutes per run via structured, categorized failure attribution reports (test vs. application fault).

- Shipped a multi-tenant Chatbase AI chatbot integration within CoreCMS with tenant-safe isolation, secure secret storage, and HMAC-based session validation.

- Implemented an admin provisioning utility and agent-guided onboarding tour (Shepherd.js) with CMS-managed tooltips and restart/skip controls.

Full Stack Developer Intern

Fusion92

- Led the redesign of the Platform Management page in CoreCMS using Vue 3 components, improving usability and UI structure.

- Collaborated cross-functionally with product and client teams in an Agile/Jira environment to scope and ship features.

- Validated APIs via Swagger and supported QA and release processes through Azure DevOps pipelines.

Recent Project

FailChain

End-to-end test failure analysis tool. Parses a test run, groups failures by error signature, and generates a structured report with root cause, fault classification, and recommendations.
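
The grouping step described above can be illustrated with a minimal sketch. This is not FailChain's actual code: the function names, the input shape (dicts with `test` and `error` keys), and the normalization rules are all assumptions chosen for illustration. The idea is to reduce each error message to a signature by masking volatile values such as timeouts and addresses, so that failures with the same underlying cause land in the same group.

```python
import re
from collections import defaultdict


def error_signature(message: str) -> str:
    """Reduce an error message to a stable signature (hypothetical rules).

    Only the first line is kept, and volatile values are masked so that
    two failures differing only in numbers or addresses compare equal.
    """
    stripped = message.strip()
    first_line = stripped.splitlines()[0] if stripped else ""
    # Mask hex literals first so the digit rule below does not split them.
    signature = re.sub(r"0x[0-9a-fA-F]+", "<hex>", first_line)
    signature = re.sub(r"\d+", "<n>", signature)
    return signature


def group_failures(failures):
    """Group test names by the signature of their error message."""
    groups = defaultdict(list)
    for failure in failures:
        groups[error_signature(failure["error"])].append(failure["test"])
    return dict(groups)


if __name__ == "__main__":
    run = [
        {"test": "login", "error": "Timeout 30000ms exceeded waiting for #submit"},
        {"test": "checkout", "error": "Timeout 5000ms exceeded waiting for #submit"},
        {"test": "cart", "error": "expect(received).toBe(expected)\nExpected: 3"},
    ]
    for signature, tests in group_failures(run).items():
        print(signature, tests)
```

Grouping before any model call means each distinct signature only needs to be investigated once, however many tests share it.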

Screenshots: Before (Playwright E2E run, test failure with no root cause context), Failure attribution, Debug output, Agent reasoning, Flaky test detection, Architecture

Key details

- Framework-agnostic: designed to work across E2E testing frameworks without coupling to any one tool

- Vision model analysis per failure group, plus a LangChain tool-calling agent for root cause investigation

- Static pre-analysis pass runs before the LLM, catching obvious issues deterministically and cheaply

- Classifies each failure, per run, as a test fault, an application regression, or flaky

- Failure history tracked across runs to surface recurring flakiness patterns

- Full step-by-step audit log for every reasoning chain
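
One way to surface recurring flakiness from run history is to measure how often a test's outcome flips between consecutive runs. The sketch below is a hedged illustration, not FailChain's method: the `flip_rate` and `recurring_flaky` names, the pass/fail list representation, and the threshold values are all assumptions.

```python
from __future__ import annotations


def flip_rate(history: list[bool]) -> float:
    """Fraction of consecutive run pairs whose pass/fail outcome differs."""
    if len(history) < 2:
        return 0.0
    flips = sum(1 for prev, curr in zip(history, history[1:]) if prev != curr)
    return flips / (len(history) - 1)


def recurring_flaky(
    histories: dict[str, list[bool]],
    threshold: float = 0.3,  # assumed cutoff, tune per suite
    min_runs: int = 5,       # assumed minimum history before judging
) -> list[str]:
    """Return test names whose outcomes flip often enough to look flaky."""
    return sorted(
        name
        for name, history in histories.items()
        if len(history) >= min_runs and flip_rate(history) >= threshold
    )


if __name__ == "__main__":
    histories = {
        # True = passed, False = failed, oldest run first
        "login": [True, False, True, True, False, True],
        "checkout": [True, True, True, False, False, False],
        "cart": [True, False],
    }
    print(recurring_flaky(histories))
```

A test that passes for a while and then fails consistently, like `checkout` here, has a low flip rate and is left for regression analysis rather than being labeled flaky.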

Skills

Technical foundation

Languages

Frameworks

AI / Systems

Infrastructure

A bit about me

Outside the code

Outside of work I'm usually hooping, out on a trail, or deep in a game. Always building something on the side too.